NEWSLETTER

Four years later, a court letter explained the 1:50am rejection.

May 12, 2026

He applied to more than 100 jobs this way. He was rejected every time.

Mobley is African American, over forty, and has a documented disability. He had been sending applications into companies that use a platform called Workday to run their hiring. Workday runs cloud-based HR and finance systems for thousands of organizations globally, including many Fortune 500 companies across sectors like banking, insurance, technology, and retail. Most people applying through it assume what anyone would assume: a human being is eventually going to read what they sent.

That is not what Mobley alleges was happening. According to the complaint, before any recruiter opened a file, Workday's algorithmic screening tools, including its HiredScore AI features, scored applicants and allowed employers to filter out low-scoring candidates before any human review, so that many rejections were effectively made by a machine at the screening stage. Applicants who ranked too low never surfaced on a recruiter's screen. They were not told this. The companies using the platform often did not know the full criteria driving the scores either. The system operated in the space between what the vendor believed the employer owned and what the employer believed the vendor managed, and in that space, the question of who was accountable for what the system decided had never been answered by anyone.

On February 21, 2023, Mobley filed a federal lawsuit. The case is Mobley v. Workday, Inc., Case No. 3:23-cv-00770-RFL, U.S. District Court for the Northern District of California, before Judge Rita F. Lin. He alleged that Workday's AI screening tools discriminated against him based on his race, his age, and his disability. The court allowed his core discrimination claims to proceed.

The case escalated methodically. On May 16, 2025, Judge Lin granted preliminary certification of the case as a collective action, meaning that others who had been subject to the same policy could formally join. [2025 WL 1424347] On July 7, 2025, the court expanded the scope, ruling that the collective specifically included applicants whose applications had been scored, ranked, or screened using Workday's HiredScore AI features, and rejecting Workday's argument that HiredScore was a separate product with different legal exposure. [Civil Rights Litigation Clearinghouse, December 17, 2025] One man's complaint had become a case with the potential to reach a vast population of applicants who had never heard of Derek Mobley.

Then came the notice.

On February 17, 2026, the court authorized formal written notification to every person who had applied for a job through Workday's platform since September 24, 2020, and was forty years old or older when they applied. The notice informed them, many for the first time, that an AI system may have scored their application before any human looked at it, that a lawsuit alleged this constituted unlawful age discrimination, and that they had until March 7, 2026 to opt into the case and preserve their right to participate in any legal outcome. [Wiggins Childs, February 17, 2026]

Think about who received that letter. Someone who applied for jobs in late 2021, was rejected within hours each time, assumed they were not a fit, and moved on. Four years later, a court letter arrived telling them a machine may have sorted them out before anyone saw their name. For the people who opted in by March 7, 2026, the case continues. For the people who missed the deadline, or never received the letter, or could not understand what it was asking, the right to participate is gone.

The machine filtered him once at 1:50 in the morning. The paperwork filtered them again in the daylight, four years later.

Somewhere, at one of those companies, a procurement manager signed the Workday renewal in 2022. Legal reviewed the contract. Finance approved the budget. Nobody in any of those rooms asked which hiring decisions the software was authorized to make independently, before the HR team arrived the next morning, while the applicants were still asleep. That question was not on the form. It has never been on the form.

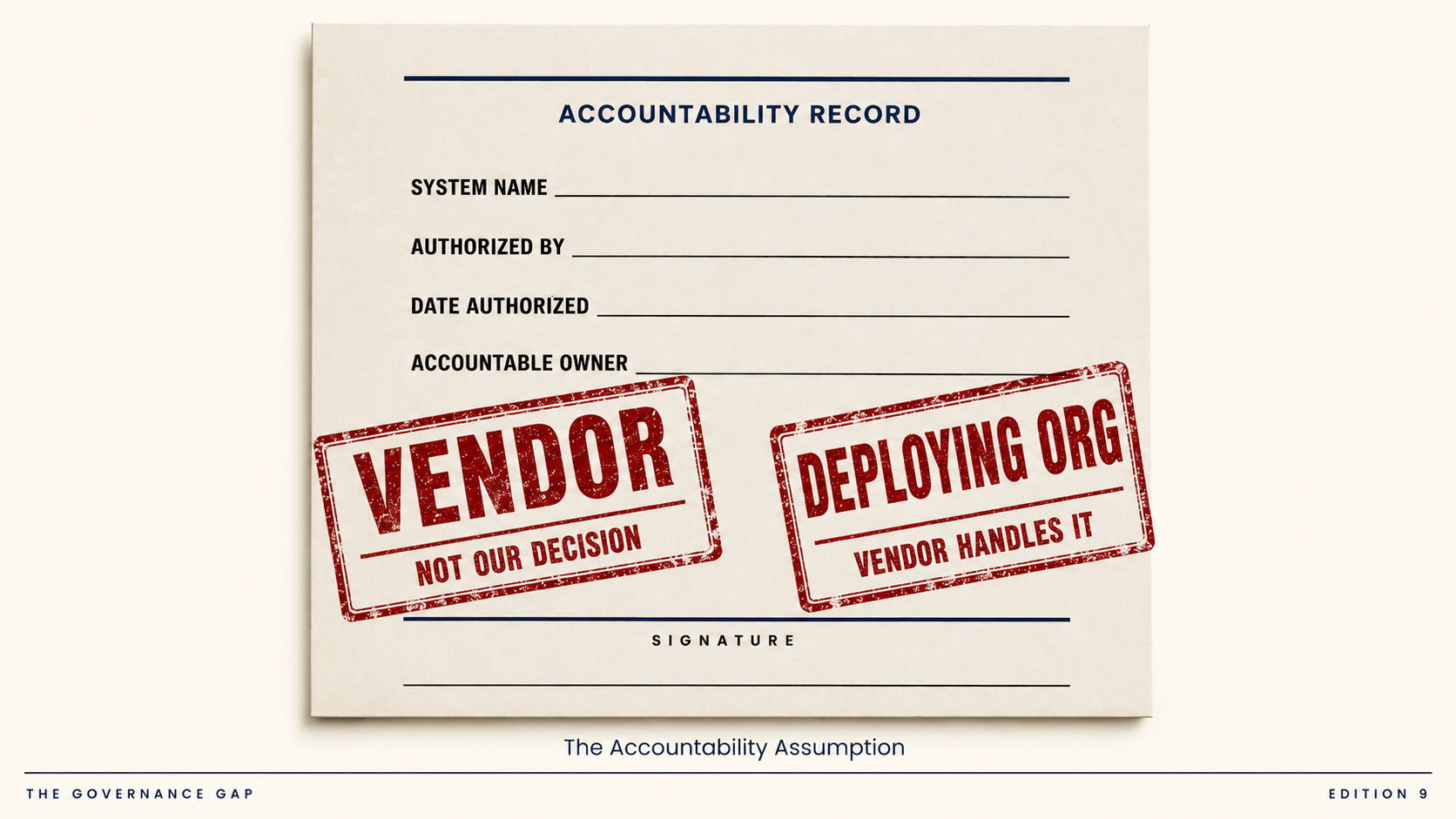

Bias is what the courts will determine. The governance failure existed before the first score was ever run. Every company that deployed Workday's screening tools had put into production a system that made consequential decisions about real people, and no named person inside those organizations had written down, before it went live, what the system was authorized to decide. There was a vendor contract. There was a procurement approval. There was no document stating: this system exists, this is what it is permitted to influence, this named person is accountable for what it produces.

Throughout the litigation, Workday has argued that it merely provides the technology and that its customers, not Workday, make the final hiring decisions. On the customer side, many employers treated Workday's tools as handling the screening, even as they saw themselves as making the ultimate hiring decision. Both positions are sincerely held. Together they describe a gap that has a name.

The Accountability Assumption is the belief, operating inside almost every organization that licenses an AI tool, that accountability for what the tool decides lives somewhere else. With the vendor. With the platform. With whoever signed the contract. The belief does not transfer the obligation. It leaves the obligation sitting unclaimed in the middle, for months or years, until a court authorizes notice to everyone forty or older who applied for a job through Workday's platform since September 24, 2020, and those applicants hear, for the first time, what was happening to their application in the middle of the night.

For more than a decade, banking regulators have required supervised institutions to maintain a formal inventory of models, validate them independently, and assign a named model owner accountable for their use before they go into production.

Federal Reserve SR 11-7 and OCC 2011-12, published in 2011 and updated by new interagency guidance in April 2026, established this as the baseline standard for any algorithm making a consequential decision inside a regulated bank. The question for 2026 is whether the same discipline applied to credit and risk models inside those institutions is being applied with the same rigor to the AI tools the HR team licensed from a marketplace last spring.

Microsoft Purview maps and classifies the data your AI systems can access, giving you visibility into where sensitive information lives and how it flows. Agent 365, generally available since May 1, 2026, gives you a control plane to discover, observe, and govern AI agents across your organization's Microsoft environment. Neither tool can surface a system that was never declared before it ran. They govern what has been registered. The Workday case is the shape of what they cannot see.

Before any AI system in your organization touches a hiring decision, a credit review, a claims determination, or a fraud flag, it must be declared. Four things, in writing, before it runs:

1. The system's name and vendor
2. The specific decisions it is authorized to influence
3. A named accountable owner inside your organization
4. The date that authorization was granted
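For teams that keep this record in a system rather than on paper, the four items might be captured in a structure like the one below. This is a minimal sketch in Python, not a reference to any product's schema: the class `AISystemDeclaration`, the function `may_influence`, the field names, and the sample values are all hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AISystemDeclaration:
    """Hypothetical written declaration for one AI system, recorded before it runs."""
    system_name: str                       # 1. the system's name...
    vendor: str                            #    ...and vendor
    authorized_decisions: tuple[str, ...]  # 2. decisions it may influence
    accountable_owner: str                 # 3. a named person inside the organization
    authorized_on: date                    # 4. date the authorization was granted

    def problems(self) -> list[str]:
        """Return the reasons this declaration is incomplete (empty list if none)."""
        issues = []
        if not self.system_name.strip() or not self.vendor.strip():
            issues.append("system name and vendor are required")
        if not self.authorized_decisions:
            issues.append("at least one authorized decision is required")
        if not self.accountable_owner.strip():
            issues.append("a named accountable owner is required")
        return issues


def may_influence(declaration: AISystemDeclaration | None, decision: str, on: date) -> bool:
    """True only if a complete declaration covered this decision on the given date."""
    if declaration is None or declaration.problems():
        return False
    return decision in declaration.authorized_decisions and declaration.authorized_on <= on


# Illustrative values only; none of these names come from the case record.
screening = AISystemDeclaration(
    system_name="Resume screening tool",
    vendor="Example Vendor",
    authorized_decisions=("rank applicants for recruiter review",),
    accountable_owner="Jane Doe, VP Talent Acquisition",
    authorized_on=date(2026, 1, 15),
)
```

In this sketch, a check such as `may_influence(screening, "reject applicants without human review", date(2026, 2, 1))` fails because that decision was never written down, and `may_influence(None, ...)` fails because no declaration exists at all. The point of the design is that an undeclared system or an unlisted decision is refused by default rather than allowed by default.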

That record separates a deployment from a liability. Without it, the Accountability Assumption fills the space quietly, until someone who received a rejection at 1:50 in the morning finally decides they deserve to know why.

In your organization, which AI systems are making consequential decisions right now that no named person formally authorized before they went live?