NEWSLETTER

An AI Model Ran for Six Months Before Anyone Asked What It Was Actually Doing.

May 5, 2026

NEWSLETTER

May 5, 2026

On November 4, 2025, Upstart Holdings disclosed on its Q3 earnings call that Model 22 had become overly conservative during the quarter, reducing borrower approvals and conversion rates. Co-founder and CTO Paul Gu acknowledged on that call that executives had been"knowingly making a choice with our model to be a little bit more conservative on the credit side in earlier parts of the quarter,"and that "there's always some kind of sampling and measurement error." CEO Dave Girouard added that the model had been "maybe overreacting" to macroeconomic signals.

Full-year revenue guidance was revised from approximately $1.055 billion to $1.035 billion, a reduction of approximately $20 million. The stock fell $4.49 per share, or 9.71%, closing at $41.75 on November 5, 2025. Securities class actions were filed in federal courts in April 2026, alleging that Upstart and four of its executives made materially false and misleading statements about Model 22 throughout the period from May 14, 2025 to November 4, 2025. Whether those disclosures constituted securities fraud is a question the courts will answer.

The governance question underneath it is not going to wait for the verdict.

Go back to May 14, 2025. Upstart held its inaugural AI Day investor event in New York City. Model 22 was presented as enabling lenders to approve more borrowers at lower rates. The company raised full-year revenue guidance multiple times during the months that followed, attributing the improved outlook directly to Model 22's performance. The executives left that AI Day with a publicly documented organizational position: this model does this thing, and it is producing results in that direction.

By Q3, according to the earnings call disclosures and the complaint allegations, the model had shifted in a direction that ran counter to that framing. The question the public record cannot answer from the outside is whether the governance structure had any mechanism for comparing what the model was actually doing against what the May documentation had said it would do. That comparison, if it existed, has not appeared in what has been disclosed. An owner for that comparison, a cadence for running it, a trigger condition that would have required it to run before November, none of these have emerged in the public record.

There is a form for nearly every part of this process. There is a form for the vendor assessment, the security review, and the data access request. There is a form for the post-implementation review that gets scheduled sixty days after go-live, postponed to ninety, and eventually written from whatever the team can reconstruct at day one-twenty while everyone has moved to the next initiative. The form that would have required a named person to compare what Model 22 was producing each quarter against the organizational purpose that authorized its deployment is the form that does not appear anywhere in the story. Creating it had not made it onto the deployment checklist, because that kind of accountability requirement tends to appear in governance documentation only after the question arrives from a direction the organization did not choose.

The governance function learned in November what the model had been doing since May. The mechanism that surfaced the information was a revenue miss.

Here is what makes this harder to look away from.

By November 4, the company's own CTO and CEO were disclosing on a live earnings call that the model had been behaving differently from what the May investor presentation described, and that the conservative calibration had been a knowing choice made earlier in the quarter. The behavioral change was not invisible inside the organization. The data existed. The observation existed.

What the organization had not built, based on everything in the public record, was a formal obligation requiring anyone to compare what was internally observed against what had been publicly documented as the system's intent. The gap between those two things had no owner. It had no cadence. It had no trigger condition that would have closed it before a quarterly earnings call closed it instead.

The model was running. The comparison was not.

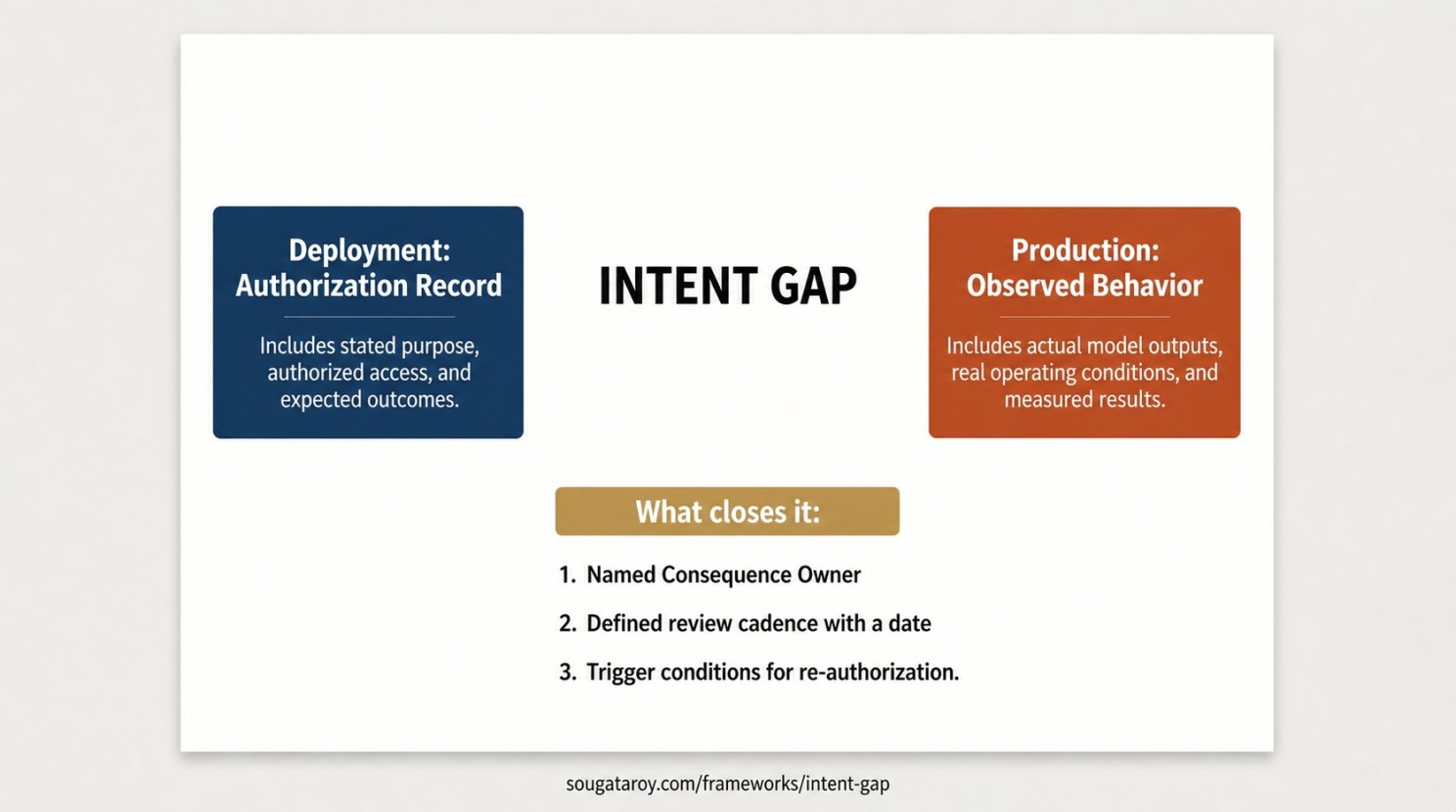

That space between what an organization formally documents an AI system will do and what it is actually doing in production is the Intent Gap. Upstart's version surfaced in credit conversion rates and a $20 million guidance revision. Most organizations will not receive the measurement that way. It arrives as an audit finding, a customer complaint that turns out to be the fourth this month, a board question with no documented answer, an incident report whose timeline does not align with any authorization record on file. The measurement surface varies. The underlying gap is the same.

Closing the Intent Gap requires three things that most governance frameworks do not have documented together in one place. The authorization record written before deployment: what the system was intended to do, what it was authorized to access, what outcomes it was expected to produce. Evidence of the most recent comparison between that record and observed production behavior, with a named reviewer and a date attached. And the trigger conditions that require the comparison to happen ahead of schedule: a shift in model behavior, a change in the operating environment, a version update to the model itself.

Agent 365 tells you which agents are running. Microsoft Purview tells you what they are accessing. What neither product generates for you is the comparison between what those dashboards show and the authorization record written before deployment. That comparison is a governance obligation. The absence of a named person formally accountable for running it is not a tooling gap. It is a design decision made by default, and it will eventually be surfaced as something else by someone whose involvement the organization did not select.

Upstart had the intent publicly documented in May. The company's own executives disclosed in November that internal visibility into what the model was doing during Q3 had existed before the earnings call. The piece the governance structure did not appear to produce was anyone formally required to put those two things side by side, on a defined cadence, and sign their name to the result before a filing deadline made it unavoidable.

In your organization, who is formally assigned to compare what your deployed AI systems are actually doing against the documented intent that authorized their deployment?